Navigating the AI Productivity Paradox

March 7, 2026

There is an underlying anxiety in many leadership discussions today: will AI eventually automate knowledge workers out of existence? The dominant narrative frames AI as a direct replacement for human labor, slowly eroding demand for engineering, product, and data roles. But the macroeconomic data tells a different story. We are not approaching the end of knowledge work. We are standing at the start of its biggest expansion.

The Productivity Paradox

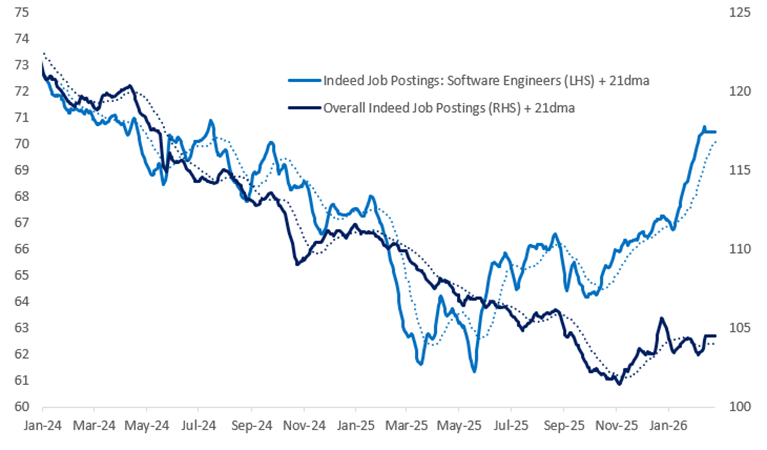

Despite the displacement anxiety, labor market data points the other way. Global job postings for software engineers are up 11% year over year.[1] Why? AI is not replacing knowledge work. It is expanding the scope of what teams can build.

As AI strips away the friction of basic coding and data wrangling, teams are not running out of work. They are finally gaining the capacity to build the complex systems customers have always expected but that teams historically lacked the bandwidth to attempt.

Jevons Paradox in Knowledge Work

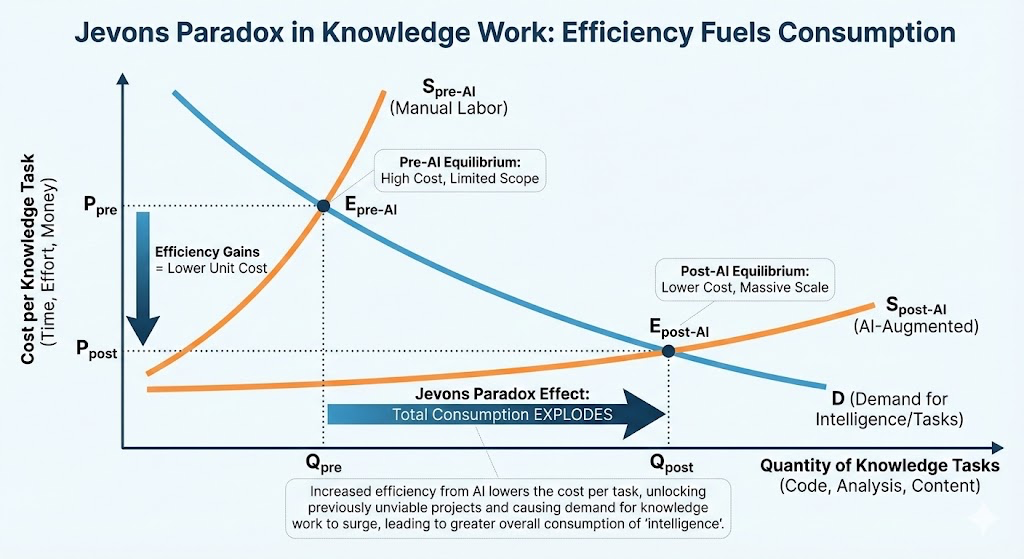

To understand where this goes, it helps to look at a 160-year-old economic concept. In the 19th century, William Stanley Jevons noticed a counterintuitive trend: as steam engines became more efficient at using coal, coal consumption did not drop. It skyrocketed. Making the resource cheaper and more efficient unlocked entirely new, previously unimaginable uses for it.

The same pattern is playing out with cognitive labor. AI lowers the time cost of writing boilerplate code, querying a database, or drafting technical documentation.

“Jevons paradox strikes again! As AI gets more efficient and accessible, we will see its use skyrocket, turning it into a commodity we just can’t get enough of.”

Satya Nadella, Microsoft CEO[2]

When the cost of execution drops, the demand for complex problem-solving surges. Projects that were permanently stuck in the backlog suddenly become viable.
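The arithmetic behind the paradox can be sketched in a few lines. This is a minimal illustration, assuming demand follows a constant-elasticity curve, which is a textbook simplification and not something the sources cited here measure. The function name, the elasticity values, and the 50% cost reduction are all hypothetical, chosen only to show when total effort rises instead of falling.

```python
def total_effort_ratio(cost_ratio: float, elasticity: float) -> float:
    """Return new total effort divided by old total effort.

    cost_ratio: new effort per unit of output / old effort per unit
                (0.5 means each feature now takes half the effort).
    elasticity: absolute price elasticity of demand for output
                (hypothetical; assumes cost savings pass through to price).
    """
    # Constant-elasticity demand: output demanded scales as price ** -elasticity.
    output_ratio = cost_ratio ** (-elasticity)
    # Total effort = units of output * effort per unit.
    return output_ratio * cost_ratio


# Hypothetical scenario: AI halves the effort needed per feature.
for elasticity in (0.5, 1.0, 1.5):
    ratio = total_effort_ratio(0.5, elasticity)
    print(f"elasticity={elasticity}: total effort changes by a factor of {ratio:.3f}")
```

Under these assumptions, total effort grows only when elasticity is above 1, that is, when demand for output responds more than proportionally to the drop in cost. That is the condition the Jevons argument depends on: the backlog of viable projects has to expand faster than the cost of each one falls.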

The Rise of the Systems Thinker

To capitalize on this paradox, the nature of knowledge work must shift. The future belongs not to the fastest manual coders, but to the sharpest systems thinkers.

Think of an AI agent as a tireless junior developer. If AI handles the basic integrations, the human role shifts to architecture. The questions change: How do these systems interact? Where are the edge cases? Does this hold at scale? The value of a team is shifting from manual code production to the strategic orchestration of intelligent systems.

This shift runs deeper than delegation. Grady Booch, one of software engineering’s most respected voices, describes the current moment as the “third golden age of software engineering,” where the discipline moves from writing algorithms to reasoning about systems at a higher order of abstraction. Typing precisely is useful. Thinking clearly about systems is what wins.

In this paradigm, the skill that matters is not knowing how to write a data pipeline. It is understanding why a specific architecture matters in a specific organizational context. When AI handles execution, the human role becomes meta-design: defining how data flows between systems, which services need to talk to each other, where the failure points live, and what happens at the edges that agents were not built to handle.

Deloitte’s 2026 technology predictions go as far as naming the AI agent orchestrator one of the most important emerging roles in enterprise technology: not just a technical function, but a strategic one. The firms that pull ahead will not be those with the most AI tools. They will be those with people who understand how complex systems need to work together: the data contracts between services, the organizational handoffs between teams, and the business logic that no model can infer from the code alone.

That understanding is inseparable from context. Knowing that a loyalty platform and a CRM system need to share a customer identity is a technical requirement. Knowing why they currently do not, which team owns each, what a migration would cost politically and technically, and which sequence of changes will actually get approved is systems thinking applied to the real world. It is the kind of knowledge that compounds over years and cannot be generated on demand.

The Collapse of the Entry Level Role

We must also be intellectually honest about the painful side of this transition. While demand for high-level architectural work is growing, the bottom rungs of the traditional career ladder are eroding.

The Divergence in Tech Hiring (2025-2026)[3]

| Role Level | AI Exposure Impact | Hiring Trend |
|---|---|---|
| Entry-Level (Junior/P1) | High (routine, codified tasks) | -73.4% |
| Early-Career (Ages 22-25) | High | -13.0% |
| Senior/Architect | Low (tacit knowledge, strategy) | +11.0% |

This presents a structural challenge for the industry. If AI automates the intellectually mundane work, the routine bug fixes and basic reporting that traditionally served as the apprenticeship phase, how does the industry train the next generation of senior leaders? Early-career roles need deliberate redesign, bypassing repetitive drudgery and training junior practitioners directly on judgment, AI tooling, and strategic delegation.

Why Context Is Everything

Human roles will endure because of the gap between codified tasks and tacit knowledge. AI excels at the codified: rules-based, predictable work that can be written in a manual.

But tacit knowledge is where AI breaks down. It does not understand a brand’s accumulated voice and history. It cannot navigate the cross-functional stakeholder dynamics required to launch a new initiative. It does not know why a specific message resonates with one customer segment and falls flat with another.

I have led large-scale martech programs across enterprise organizations, scaling teams responsible for platforms that serve millions of consumers. The hardest problems were never technical. They were organizational. Which team owns the customer identity graph? How do you align a loyalty platform, a CRM system, and a digital acquisition stack that three different teams built over a decade? How do you get an executive to deprioritize a legacy system they championed?

No model answers those questions. The answers live in institutional memory, in the relationships between teams, in the unwritten rules of how decisions actually get made. That kind of context takes months to develop and cannot be prompted into existence.

This is where experienced practitioners retain their edge. Not in the ability to build the pipeline, but in knowing which pipeline matters, who has to approve it, and what is actually blocking it. Execution is commoditizing. That judgment is not.

Conclusion

We are not competing against AI. We are competing against teams that know how to use it better. The leaders who embrace the Jevons Paradox will stop treating automation as a threat and start using it as leverage. They will use AI to clear away low-value work, freeing up capacity for the problems that actually require human judgment.

The question worth sitting with: If AI can handle the bottom 40% of your daily workload, what higher-order problems will you finally have the bandwidth to tackle?

Footnotes

1. Indeed job postings data via Citadel Securities, 2025.

2. Nadella, S. (2025). Remarks on AI efficiency and market demand, originally cited in NPR’s Planet Money: “Why the AI world is suddenly obsessed with Jevons paradox.”

3. QUASA Connect (2026). “The 73% Collapse: How AI Is Erasing Entry-Level Tech Jobs and Rewriting the Career Ladder.”